To be honest, I spent most of my first quarter of graduate school on classes, seminars, and getting adjusted to the new environment. However, I did start attending research meetings in a group I am interested in, and I have some ideas for a potential project. I am very excited about beginning this project, and I hope to make more progress this coming quarter. Luckily, there is a postdoc in the group who is also excited about it, and he has been very thorough in providing me with papers to read and feedback on my work. Below, I briefly describe my progress so far.

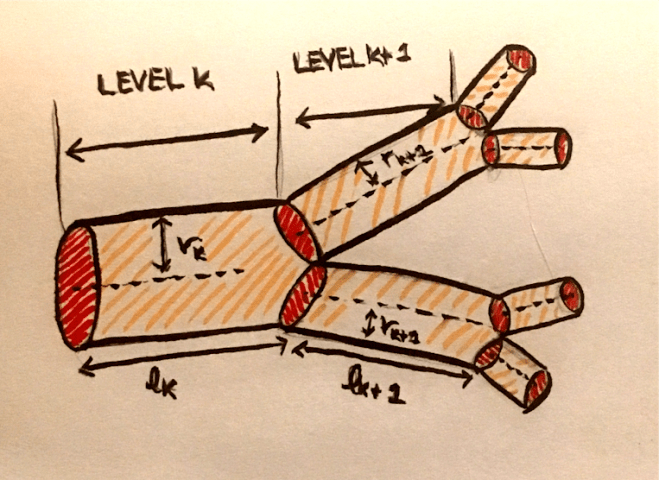

The group I have been working in studies a wide range of systems such as predator-prey dynamics, multi-drug interaction, the relationship between sleep and metabolic rate, and cardiovascular networks. Since there are so many diverse projects happening in our group, our group meetings are split by topic. The sub-group I joined focuses on networks. So far, they have been mostly focusing on cardiovascular networks. They develop models that describe these networks, such as the scaling laws that describe changes in the radius and length of vessels across levels of the network. Then, they test these models against data extracted from 3D images.

Since my primary interest in biology is in neuroscience, I approached the group to find out if there were any projects in neuroscience. The PI told me that although there are currently no projects in neuroscience in this group, there are mathematical similarities between neuronal networks and cardiovascular networks, and he saw a future in extending the image analysis of cardiovascular networks to neurons.

We can think of a network of neurons, like the cardiovascular system, as a resource distribution network that is subject to biological and physical constraints. Deriving a power law relationship between radius and length of successive levels of a vascular network relies on minimizing the power lost due to dissipation while maintaining the assumptions that the network is of a fixed size, a fixed volume, and space filling. This calculation is carried out using the method of Lagrange multipliers, assuming that the flow rate is constant. The power loss due to dissipation in the cardiovascular network is $P = \dot{Q_0}^2 Z_{net}$, where $\dot{Q_0}$ is the volume flow rate of blood and $Z_{net}$ is the resistance to blood flow in the network. For a neuronal network, we will use an analogous equation, $P = I_0^2 R_{net}$, where $I_0$ is the current, and $R_{net}$ is the resistance to current flow in the network. We will carry out the Lagrange multiplier calculations in a similar fashion to the calculations for cardiovascular networks.

For cardiovascular networks, we use the Poiseuille formula for resistance, which is the hydrodynamic resistance to blood flow in the network. According to this formula, the impedance at a level k in the network is given by $Z_k = \frac{8 \mu l_k}{\pi r_k^4}$. We can reduce $\frac{8 \mu}{\pi}$ to a single constant C, so this is equivalent to $Cl_k r_k^{-4}$. Thus, the resistance is proportional to the product of some powers of the length and the radius. If we want a general formula for the resistance, we can consider a formula with powers p and q of length and radius, respectively. That is, our resistance formula at level k is $R_k = \Tilde{C} l_k^p r_k^q$.
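As a small illustration of the general form, the sketch below evaluates $R_k = \Tilde{C} l_k^p r_k^q$ in Python and compares it with the Poiseuille formula written out directly. The function names, argument names, and numerical values are assumptions made for illustration; only the Poiseuille case (p = 1, q = -4, $\Tilde{C} = \frac{8\mu}{\pi}$) comes from the text above.

```python
import math


def level_resistance(length, radius, c_tilde, p, q):
    """Resistance of one segment, R = c_tilde * length**p * radius**q."""
    return c_tilde * length**p * radius**q


def poiseuille_resistance(length, radius, viscosity):
    """Poiseuille formula Z = 8 * mu * l / (pi * r**4), written directly."""
    return 8.0 * viscosity * length / (math.pi * radius**4)


# Illustrative values only (arbitrary units), not measurements.
mu, l_k, r_k = 1.0, 2.0, 0.5
general = level_resistance(l_k, r_k, c_tilde=8.0 * mu / math.pi, p=1, q=-4)
direct = poiseuille_resistance(l_k, r_k, mu)
print(math.isclose(general, direct))
```

Keeping p and q as parameters means the same function can be reused for any resistance law of this power-law form.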

We define the objective function as follows:

\[P = I_0^2 R_{net} + \lambda V + \lambda_M M + \sum_{k=0}^{N} \lambda_k n^k l_k^3 \]

This objective function arises from the fact that we want to minimize power loss, the first term, while imposing the three constraints that correspond to the last three terms: size, volume, and space filling. Each constraint corresponds to a Lagrange multiplier. The last constraint comes from the fact that a resource distribution network must feed every cell in the body. Thus, each branch at the end of the network feeds a group of cells called the service volume, $v_N$, where N is the terminal level, and the number of vessels at that level is $N_N$, so the total volume of living tissue is $V_{tot} = N_N v_N$. If we assume that this argument holds over all network levels, we have $N_N v_N = N_{N-1} v_{N-1} = \dots = N_0 v_0$. We assume that the service volumes vary in proportion to $l_k^3$, so the total volume is proportional to $N_k l_k^3$. Our objective function has one space-filling term for each level, since the space-filling constraint must be satisfied at each level k. We assume that the branching ratio, n, is constant, so the number of vessels at level k is $N_k = n^k$. We can define the volume as $V = \sum_{k=0}^N N_k \pi r_k^2 l_k$.

Note that we are defining the constraints the same way we did for vascular networks, but it is unclear whether these assumptions are accurate for neuronal networks. However, for the sake of arriving at a preliminary theoretical result for the scaling of neuronal networks, we will keep these constraints.

The total resistance at each level is the resistance for a single vessel divided by the total number of vessels, that is, $R_{k, tot} = \frac{\Tilde{C} l_k^p r_k^q}{n^k}$. The net resistance of the network is the sum of the resistances at each level, so $R_{net} = \sum_{k = 0}^N \frac{\Tilde{C} l_k^p r_k^q}{n^k}$. If we define new Lagrange multipliers, $\lambda' = \pi \lambda$, we can rewrite the objective function as follows:

\[P = I_0^2 \sum_{k = 0}^N \frac{\Tilde{C} l_k^p r_k^q}{n^k} + \lambda' \sum_{k=0}^N n^k r_k^2 l_k + \lambda'_M M + \sum_{k=0}^{N} \lambda'_k n^k l_k^3 \]

To normalize further, we can divide by the constant $I_0^2\Tilde{C}$, since the current is constant. Absorbing this constant into new definitions of the Lagrange multipliers, we get:

\[P = \sum_{k = 0}^N \frac{l_k^p r_k^q}{n^k} + \Tilde{\lambda} \sum_{k=0}^N n^k r_k^2 l_k + \Tilde{\lambda}_M M + \sum_{k=0}^{N} \Tilde{\lambda}_k n^k l_k^3 \]

To find the radius scaling ratio, we differentiate P with respect to $r_k$ at an arbitrary level k and set the result to 0. This gives a formula for a Lagrange multiplier, from which we derive the scaling law.

So we have:

\[\frac{dP}{dr_k} = \frac{l_k^p qr_k^{q-1}}{n^k} + 2 \Tilde{\lambda} n^k r_k l_k = 0 \]

Solving for the Lagrange multiplier, we have:

\[\Tilde{\lambda} = -\frac{qr_k^{q-1}l_k^p}{2n^{2k} r_k l_k} = \frac{\frac{-q}{2}}{n^{2k}l_k^{1-p}r_k^{2-q}}\]

Since this is a constant, the denominator must be constant across levels. So

\[\frac{n^{2(k+1)}l_{k+1}^{1-p}r_{k+1}^{2-q}}{n^{2k}l_{k}^{1-p}r_{k}^{2-q}} = 1\]

It is useful to consider the case where the resistance is linear in the length, that is, p = 1. In this case, we obtain the scaling ratio:

\[\frac{n^{2(k+1)}r_{k+1}^{2-q}} {n^{2k}r_{k}^{2-q}} = 1 \rightarrow \frac{r_{k+1}}{r_k} = n^{\frac{-2}{2-q}}\]
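The claim that the multiplier is level-independent under this ratio can be checked numerically. The following is a minimal sketch, assuming p = 1 so that the length drops out of the denominator; the function names, the choice n = 2, q = -4, and the starting radius `r0` are illustrative assumptions, not values from the project.

```python
import math


def radius_ratio(n, q):
    """Predicted r_{k+1} / r_k = n**(-2 / (2 - q)) for p = 1."""
    return n ** (-2.0 / (2.0 - q))


def lambda_tilde(k, n, q, r0=1.0):
    """Multiplier from the radius equation with p = 1:
    (-q / 2) / (n**(2k) * r_k**(2 - q))."""
    r_k = r0 * radius_ratio(n, q) ** k
    return (-q / 2.0) / (n ** (2 * k) * r_k ** (2.0 - q))


# n = 2 and q = -4 are example choices; r0 is an arbitrary level-0 radius.
values = [lambda_tilde(k, n=2, q=-4) for k in range(6)]
print(all(math.isclose(v, values[0]) for v in values))
```

If the radius ratio were changed to any other power of n, the values in the list would drift with k, which is a quick way to see why constancy of the multiplier fixes the exponent.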

To find the length scaling ratio, we differentiate P with respect to $l_k$ at an arbitrary level k and set the result to 0. This gives a formula for the Lagrange multiplier $\Tilde{\lambda_k}$, which, combined with the formula for $\Tilde{\lambda}$ above, yields the scaling law.

So we have:

\[\frac{dP}{dl_k} = \frac{pl_k^{p-1}r_k^{q}}{n^k} + \Tilde{\lambda} n^k r_k^2 + 3\Tilde{\lambda_k} n^k l_k^2 = 0 \]

Solving for the Lagrange multiplier, we have:

\[\Tilde{\lambda_k} = \frac{-\frac{pl_k^{p-1}r_k^{q}}{n^k} - \Tilde{\lambda} n^k r_k^2}{3n^k l_k^2}\]

Substituting $\Tilde{\lambda}$, as calculated before:

\[\Tilde{\lambda_k} = \frac{-\frac{pl_k^{p-1}r_k^{q}}{n^k} + \frac{q r_k^2}{2n^{k}l_k^{1-p}r_k^{2-q}} }{3n^k l_k^2} = \frac{(\frac{q}{2} - p)r_k^q l_k^{p-1}}{3n^{2k} l_k^2} = \frac{q-2p}{6} \frac{1}{n^{2k}l_k^{3-p}r_k^{-q}}\]

Since this is a constant, the denominator must be constant across levels. So

\[\frac{n^{2(k+1)}l_{k+1}^{3-p}r_{k+1}^{-q}}{n^{2k}l_{k}^{3-p}r_{k}^{-q}} = 1\]

In the case where p=1, we have

\[ \frac{n^{2(k+1)}l_{k+1}^{2}r_{k+1}^{-q}}{n^{2k}l_{k}^{2}r_{k}^{-q}} = 1\rightarrow (\frac{l_{k+1}}{l_k})^2 = n^{-2} (\frac{r_{k+1}}{r_k})^q\]

Substituting the scaling law for radius, we have:

\[ (\frac{l_{k+1}}{l_k})^2 = n^{-2} (n^{\frac{-2}{2-q}})^q \rightarrow \frac{l_{k+1}}{l_k} = n^{-1 - \frac{q}{2-q}} \rightarrow \frac{l_{k+1}}{l_k} = n^{\frac{-2}{2-q}} \]
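
For completeness, the last simplification of the exponent comes from putting the two terms over a common denominator:

\[-1 - \frac{q}{2-q} = -\frac{(2-q) + q}{2-q} = \frac{-2}{2-q}\]

So, for p = 1, the length ratio has the same exponent as the radius ratio.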

We can check these results against the vascular case, where q = -4. The scaling laws for radius and length become $\frac{r_{k+1}}{r_k} = \frac{l_{k+1}}{l_k} = n^{-1/3}$, as expected.
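The same check can be written as a few lines of Python using exact fractions. This is only a sketch: the function name `scaling_exponent` is an assumption, and it covers the p = 1 case with an integer q.

```python
from fractions import Fraction


def scaling_exponent(q):
    """Exponent a in r_{k+1}/r_k = l_{k+1}/l_k = n**a for p = 1,
    that is, a = -2 / (2 - q). Expects an integer q."""
    return Fraction(-2, 2 - q)


print(scaling_exponent(-4))  # -1/3
```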

We will now attempt to repeat these calculations using a resistance formula specific to neuronal networks.

We think of the resistance to blood flow as the resistance due to the viscosity of the fluid. For neuronal networks, we can think of axons and dendrites as wires through which current is flowing. The resistance is then the resistance to current flow through the “wire” due to intrinsic properties of the wire. It is given by $R_k = \frac{\rho l_k }{A}$, where A is the cross-sectional area of the wire, and $l_k$ is the length of the segment at that level. $\rho$ is the intrinsic resistivity of the axon or dendrite, and we are assuming that $\rho$ is constant, meaning that the material is uniform. If we assume that the axons or dendrites are cylindrical, we can define the cross-sectional area as $\pi r_k^2$ for level k, so the resistance for level k is given by $R_k = \frac{\rho l_k }{\pi r_k^2}$.

Assuming that the branching ratio is constant, the number of branches at each level is $n^k$, and the total resistance at each level is $R_{k,tot} = \frac{\rho l_k }{\pi r_k^2 n^k}$. The net resistance is the sum across all levels, that is $R_{net} = \sum_{k=0}^N\frac{\rho l_k }{\pi r_k^2 n^k}$.
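The sum for $R_{net}$ can be evaluated directly once the lengths and radii at each level are known. The sketch below makes one extra assumption that the paragraph above does not: lengths and radii shrink by a constant factor from one level to the next (`length_ratio` and `radius_ratio`). All names and numbers are illustrative.

```python
import math


def net_resistance(n, num_levels, l0, r0, length_ratio, radius_ratio, rho):
    """Sum of rho * l_k / (pi * r_k**2 * n**k) over levels k = 0..num_levels,
    with l_k = l0 * length_ratio**k and r_k = r0 * radius_ratio**k."""
    total = 0.0
    for k in range(num_levels + 1):
        l_k = l0 * length_ratio**k
        r_k = r0 * radius_ratio**k
        total += rho * l_k / (math.pi * r_k**2 * n**k)
    return total


# Illustrative values only: lengths halve at each level and radii stay fixed,
# so with n = 2 and rho = pi, level k contributes (1/4)**k.
print(net_resistance(n=2, num_levels=3, l0=1.0, r0=1.0,
                     length_ratio=0.5, radius_ratio=1.0, rho=math.pi))
```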

Our objective function for this case can be derived in a similar manner as in the general case, setting $\Tilde{C} = \frac{\rho}{\pi}$, setting p = 1, and q = -2, based on the constants and powers for our specific resistance equation. Thus, we have the objective function

\[P = \sum_{k = 0}^N \frac{l_k}{r_k^2 n^k} + \Tilde{\lambda} \sum_{k=0}^N n^k r_k^2 l_k + \Tilde{\lambda}_M M + \sum_{k=0}^{N} \Tilde{\lambda}_k n^k l_k^3 \]

To find the radius scaling ratio, we differentiate P with respect to $r_k$ at an arbitrary level k and set the result to 0. This gives a formula for a Lagrange multiplier, from which we derive the scaling law.

So we have:

\[\frac{dP}{dr_k} = \frac{-2l_k}{n^k r_k^3} + 2 \Tilde{\lambda} n^k r_k l_k = 0 \]

Solving for the Lagrange multiplier, we have:

\[\Tilde{\lambda} = \frac{1}{n^{2k}r_k^{4}}\]

Since this is a constant, the denominator must be constant across levels. So

\[\frac{n^{2(k+1)}r_{k+1}^{4}}{n^{2k}r_{k}^{4}} = 1\]

Thus, we can solve for the scaling ratio:

\[ \frac{r_{k+1}}{r_k} = (n^{-2})^{1/4} = n^{-1/2}\]

To find the length scaling ratio, we differentiate P with respect to $l_k$ at an arbitrary level k and set the result to 0. This gives a formula for the Lagrange multiplier $\Tilde{\lambda_k}$, which, combined with the formula for $\Tilde{\lambda}$ above, yields the scaling law.

So we have:

\[\frac{dP}{dl_k} = \frac{1}{n^k r_k^2} + \Tilde{\lambda} n^k r_k^2 + 3\Tilde{\lambda_k} n^k l_k^2 = 0 \]

Solving for the Lagrange multiplier, we have:

\[\Tilde{\lambda_k} = \frac{-\frac{1}{n^k r_k^2} - \Tilde{\lambda} n^k r_k^2}{3n^k l_k^2}\]

Substituting $\Tilde{\lambda}$, as calculated before:

\[\Tilde{\lambda_k} = \frac{-\frac{1}{n^k r_k^2} - \frac{1}{n^{k}r_k^{2}} }{3n^k l_k^2} = - \frac{2}{3n^{2k}l_k^2 r_k^2}\]

Since this is a constant, the denominator must be constant across levels. So

\[\frac{n^{2(k+1)}l_{k+1}^{2}r_{k+1}^{2}}{n^{2k}l_{k}^{2}r_{k}^{2}} = 1\]

Thus, substituting in the scaling ratio for radius, we can solve for the scaling ratio for length:

\[(\frac{l_{k+1}}{l_k})^2 = n^{-2} (\frac{r_{k+1}}{r_k})^{-2} = n^{-2} (n^{-1/2})^{-2} = n^{-1} \rightarrow \frac{l_{k+1}}{l_k} = n^{-1/2}\]

Note that these scaling laws are consistent with the theoretical predictions from our general formulas, for q = -2.
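The two multiplier expressions derived above for the neuronal case can also be sanity-checked numerically. The sketch below takes the level-k terms of the normalized objective, plugs in $\Tilde{\lambda} = \frac{1}{n^{2k} r_k^4}$ and $\Tilde{\lambda_k} = -\frac{2}{3 n^{2k} l_k^2 r_k^2}$, and uses central finite differences to confirm that both derivatives vanish. The function names, the step size, and the example values of n, k, $r_k$, and $l_k$ are arbitrary assumptions.

```python
def level_objective(r, l, k, n, lam, lam_k):
    """Level-k terms of the normalized objective for R_k proportional to l / r**2."""
    return l / (r**2 * n**k) + lam * n**k * r**2 * l + lam_k * n**k * l**3


def central_difference(f, x, h=1e-6):
    """Second-order finite-difference estimate of f'(x)."""
    return (f(x + h) - f(x - h)) / (2.0 * h)


# Example level and segment sizes (arbitrary, for illustration only).
n, k, r_k, l_k = 2, 3, 0.7, 1.3
lam = 1.0 / (n ** (2 * k) * r_k**4)
lam_k = -2.0 / (3.0 * n ** (2 * k) * l_k**2 * r_k**2)

dP_dr = central_difference(lambda r: level_objective(r, l_k, k, n, lam, lam_k), r_k)
dP_dl = central_difference(lambda l: level_objective(r_k, l, k, n, lam, lam_k), l_k)
print(abs(dP_dr) < 1e-6, abs(dP_dl) < 1e-6)
```

The size term $\Tilde{\lambda}_M M$ is left out because it does not depend on $r_k$ or $l_k$ in the expressions above, so it does not contribute to either derivative.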

Some of the assumptions we have made for the purpose of these calculations are as follows:

- The current flow is constant across all levels of the network

- The axons and dendrites are cylindrical

- The material of the axons and dendrites is uniform and can be linked to a constant of specific resistivity

- The network has a fixed size

- The network is contained within a fixed volume

- The network is space filling

- The branching ratio is constant

Particularly in the case of the volume and space-filling constraints, and the constant branching ratio, it is unclear if a neuronal network has the same properties that we assume hold for vascular networks. In addition, it is unclear whether it is reasonable to assume that the current flow is constant. Thus, it might be worth reexamining these constraints and assumptions to add more biologically realistic and relevant ones.

Moreover, instead of focusing on this optimization problem of minimizing power loss, it might be more fruitful to examine a different optimization problem, such as minimizing the time for a signal to travel from one end to another end of the network.

These scaling laws give us some preliminary ideas to work with. We can try using image analysis techniques to measure the lengths and radii of segments of axons and dendrites across levels in images and see whether information extracted from the data supports our theoretical conclusions.
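One possible way to compare such measurements with the predictions is to fit the scaling exponent from level-averaged radii or lengths. The sketch below is a hypothetical approach, not the group's existing pipeline: it assumes that the branching ratio n is known, that one representative value per level is available, and that at least two levels are measured. The input here is synthetic, generated from the predicted law itself, purely to show that the fit recovers the exponent.

```python
import math


def fit_scaling_exponent(values_by_level, n):
    """Least-squares slope of log(value) against level k, divided by log(n).

    If value_k = value_0 * n**(a * k), the returned number estimates a.
    """
    ks = range(len(values_by_level))
    logs = [math.log(v) for v in values_by_level]
    k_mean = sum(ks) / len(logs)
    log_mean = sum(logs) / len(logs)
    slope = (sum((k - k_mean) * (y - log_mean) for k, y in zip(ks, logs))
             / sum((k - k_mean) ** 2 for k in ks))
    return slope / math.log(n)


# Synthetic radii generated from the predicted law r_k = r_0 * n**(-k/2);
# these are not measurements.
n = 2
synthetic_radii = [1.0 * n ** (-0.5 * k) for k in range(6)]
print(round(fit_scaling_exponent(synthetic_radii, n), 6))
```

With real image data, an estimate near -1/2 would support the wire-resistance prediction, while a value near -1/3 would look more like the vascular case.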

References

Savage, Van M., Deeds, Eric J., Fontana, Walter. (2008). Sizing up Allometric Scaling Theory. PLOS Computational Biology.

Johnston, Daniel, Wu, Samuel Miao-Sin. (2001). Foundations of Cellular Neurophysiology. MIT Press.

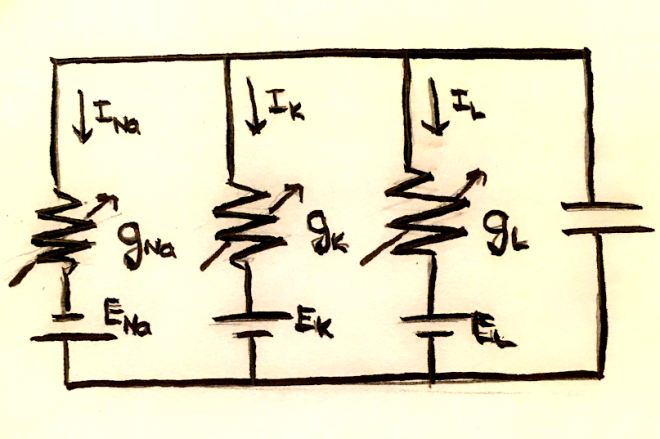

Because of charge conservation, the sum of the currents across the capacitor and each of the resistors must be 0. In mathematical terms, this is $C_m \frac{dV_m}{dt} = -\sum_i I_i$.
